The AI Innovation Cycle Started in 1956. Here's Why We Keep Forgetting That.

GPT, Claude, Gemini: the AI gold rush feels brand new. But the exact same hype, fear, and funding frenzy has happened three times before. We traced the pattern.

Key Takeaways

- AI has gone through 3 major hype cycles in 70 years; we're now in the fourth

- Pioneers from the 1956 Dartmouth workshop predicted human-level AI within about 20 years

- Every AI boom follows the same pattern: breakthrough → overpromise → disappointment → quiet research → next breakthrough

- The Transformer architecture behind GPT came from a 2017 Google paper by 8 researchers

Root Connection

The term 'Artificial Intelligence' was coined for a 1956 Dartmouth workshop. Every AI hype cycle since, including the current one, has followed the same boom-bust-rebirth pattern that the workshop's organizers unknowingly set in motion.

Timeline

1956: Dartmouth Workshop. The new field gets John McCarthy's name, 'Artificial Intelligence', and its founders expect human-level AI within a generation.

1966: MIT's ELIZA chatbot convinces some users they're talking to a human therapist.

1973: First AI Winter. The Lighthill Report concludes AI hasn't met expectations, and funding is cut.

1980: Expert Systems boom. Corporations pour billions into rule-based AI.

1987: Second AI Winter. Expert Systems lose momentum, and AI enters a quiet research phase.

2012: AlexNet wins the ImageNet competition, quietly starting the deep learning revolution.

2017: Google's 'Attention Is All You Need' paper introduces the Transformer, the architecture behind GPT.

2022: ChatGPT launches and reaches 100 million users in 2 months. The fourth AI boom begins.

2026: AI investment exceeds $200B annually. The pattern continues.

In the summer of 1956, ten researchers gathered at Dartmouth College in New Hampshire for a two-month workshop. They had a bold proposal: 'Every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it.'

John McCarthy, who organized the workshop, needed a catchy name for the field. He chose 'Artificial Intelligence.' The group predicted they'd solve it within a generation. Seventy years later, it remains unsolved.

What they did create, unknowingly, was a pattern: breakthrough, hype, overpromise, disappointment, funding collapse, quiet research, next breakthrough. That cycle has repeated several times since, at intervals of one to three decades.

“In 1966, ELIZA, a simple pattern-matching chatbot, convinced users they were talking to a real therapist. In 2026, we're having the exact same debate about Claude and ChatGPT. The technology changed. The human reaction didn't.”

The first cycle peaked in the 1960s. Programs like ELIZA at MIT could hold surprisingly convincing conversations. ELIZA was a simple pattern-matching program that turned your statements back into questions ('I feel sad' became 'Why do you feel sad?'), yet users became emotionally attached. Some insisted they were talking to a real therapist.
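To make "simple pattern-matching" concrete, here is a minimal Python sketch of ELIZA-style reflection. The rules, the pronoun table, and the `reflect`/`respond` functions are our own hypothetical illustrations, not the original ELIZA script; they only show the general trick of matching a template and echoing part of the input back.

```python
import re

# Hypothetical pronoun swaps so "my exams" is echoed back as "your exams".
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your", "am": "are",
    "you": "I", "your": "my",
}

# Hypothetical (pattern, reply template) rules, tried in order.
RULES = [
    (re.compile(r"i feel (.*)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"i am (.*)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"my (.*)", re.IGNORECASE), "Tell me more about your {0}."),
]


def reflect(fragment: str) -> str:
    """Lowercase a captured fragment and swap first- and second-person words."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())


def respond(statement: str) -> str:
    """Fill in the template of the first rule that matches the whole statement."""
    text = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.fullmatch(text)
        if match:
            return template.format(*(reflect(group) for group in match.groups()))
    return "Please go on."  # generic fallback when no rule matches


if __name__ == "__main__":
    print(respond("I feel sad"))                   # Why do you feel sad?
    print(respond("I am worried about my exams"))  # How long have you been worried about your exams?
```

Every reply is produced by string substitution; nothing in the program represents what "sad" or "worried" means, which is what made users' emotional attachment so striking.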

Then came the cooldown. The 1973 Lighthill Report in the UK concluded that AI research had not delivered on its ambitious promises. The British government cut AI funding at most universities, and in the US, DARPA scaled back its AI support soon after. This became the First AI Winter.

The second cycle emerged in the 1980s with Expert Systems, AI programs that encoded human expertise as if-then rules. Corporations poured billions into rule-based systems for medical diagnosis, financial analysis, and manufacturing. In 1982, Japan launched an $850 million 'Fifth Generation Computer' project to achieve AI dominance.
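To show what "encoding human expertise as rules" looks like in practice, here is a minimal forward-chaining sketch in Python. The `Rule` class, the `forward_chain` function, and the toy symptom rules are hypothetical illustrations we made up, not taken from any real 1980s system (and certainly not medical advice). The engine keeps firing any rule whose conditions are all known facts until nothing new can be concluded.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """IF every condition is a known fact THEN add the conclusion as a new fact."""
    conditions: frozenset[str]
    conclusion: str


# Hypothetical toy knowledge base, for illustration only.
RULES = [
    Rule(frozenset({"fever", "cough"}), "possible_flu"),
    Rule(frozenset({"possible_flu", "short_of_breath"}), "refer_to_doctor"),
]


def forward_chain(facts: set[str], rules: list[Rule]) -> set[str]:
    """Fire rules repeatedly until no rule adds a new fact (a fixed point)."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for rule in rules:
            if rule.conditions <= known and rule.conclusion not in known:
                known.add(rule.conclusion)
                changed = True
    return known


if __name__ == "__main__":
    print(sorted(forward_chain({"fever", "cough", "short_of_breath"}, RULES)))
    # ['cough', 'fever', 'possible_flu', 'refer_to_doctor', 'short_of_breath']
    print(sorted(forward_chain({"headache"}, RULES)))
    # ['headache']: no rule covers this case, so nothing new is concluded
```

The second call hints at the weakness: facts the rule base never anticipated produce no conclusions at all, and every new situation means hand-writing more rules.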

By 1987, the momentum had faded. Expert Systems were rigid, expensive to maintain, and couldn't handle scenarios outside their narrow rules. The Second AI Winter settled in, a period of quiet but steady foundational research.

The third cycle began quietly in 2012, when a deep neural network called AlexNet won the ImageNet competition with a top-5 error rate of about 15%, against roughly 26% for the runner-up. Deep learning worked, but only because GPUs had finally become powerful enough and labeled data had finally become plentiful enough.

In 2017, eight researchers at Google published a paper titled 'Attention Is All You Need.' It introduced the Transformer architecture, the foundation of GPT, Claude, Gemini, and nearly every major language model since. The paper has been cited over 100,000 times.

Now we're in the fourth cycle. ChatGPT gained 100 million users in two months. Investment exceeds $200 billion annually. Politicians are drafting regulations. Commentators offer predictions ranging from transformative breakthroughs to cautionary scenarios.

We've been here before. The technology is genuinely more powerful this time. But so was each previous generation compared to its predecessor. The question isn't whether this AI is impressive; it clearly is. The question is whether we'll learn from the pattern: stay grounded, keep investing in research, and build for the long term.

The root was planted at Dartmouth in 1956. The tree keeps growing, shedding leaves, and growing again. Understanding the root helps you see the cycles, and plan accordingly.

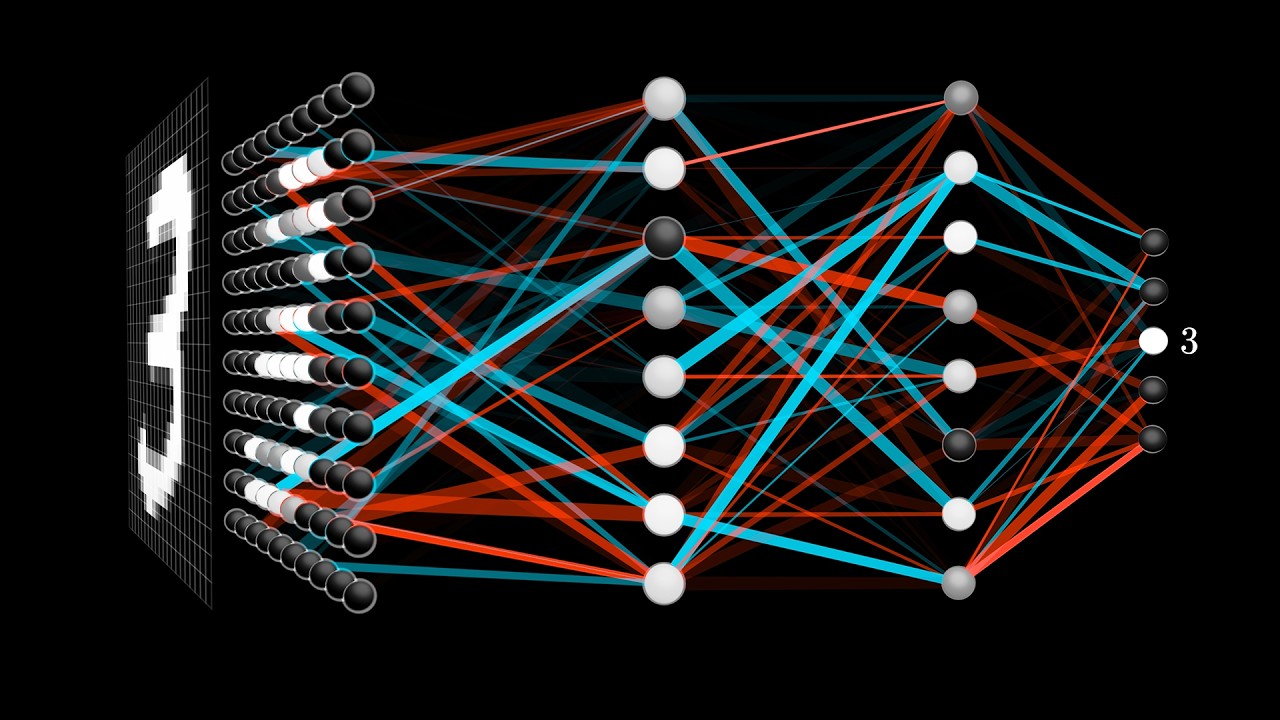

A visual deep-dive into how neural networks actually learn, the math behind the AI revolution

A simplified neural network: signals pass through layers of artificial neurons, adjusting weights until the right answer emerges
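The idea of "adjusting weights until the right answer emerges" can be sketched with the simplest possible case: a single artificial neuron (a perceptron) learning the logical AND function. This is a deliberate simplification we chose for illustration; modern networks stack many layers and adjust their weights with gradient descent and backpropagation rather than this classic perceptron rule. The data set and the `predict`/`train` names are hypothetical.

```python
# Hypothetical toy data set: two inputs and the target output of logical AND.
DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]


def predict(weights: list[int], bias: int, x: tuple[int, int]) -> int:
    """One artificial neuron: weighted sum of the inputs plus a bias, then a step threshold."""
    total = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if total > 0 else 0


def train(data, epochs: int = 20) -> tuple[list[int], int]:
    """Classic perceptron rule: after each example, nudge every weight by error * input."""
    weights = [0, 0]
    bias = 0
    for _ in range(epochs):
        for x, target in data:
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            weights = [w + error * xi for w, xi in zip(weights, x)]
            bias += error
    return weights, bias


if __name__ == "__main__":
    weights, bias = train(DATA)
    print(weights, bias)  # [2, 1] -2
    for x, target in DATA:
        print(x, predict(weights, bias, x), "expected", target)
```

Each wrong answer shifts the weights a little toward the right one; after a few passes over the data, every prediction matches and the weights stop changing.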

Chart: AI research papers published per year (thousands). 2012 marks the deep learning breakthrough; 2023 the generative AI explosion. Source: Semantic Scholar / arXiv estimates.

Enjoy This Article?

RootByte is 100% independent - no paywalls, no corporate sponsors. Your support helps fund education, therapy for special needs kids, and keeps the research going.

Support RootByte on Ko-fi

